The Great AI Power Shortage

The Structural Energy Shortage Inside AI Data Centers Is Driving an Energy Boom

We keep arguing about NVIDIA supply.

The real constraint is the grid.

Compute-hours are not governed by a single supply curve. Compute-hours are the output of stacked bottlenecks. And the slowest layer in that stack — by far — is power.

You can spin up GPUs in months.

You cannot spin up gigawatts in months.

Compute overbuild compresses margins.

Power overbuild creates optionality.

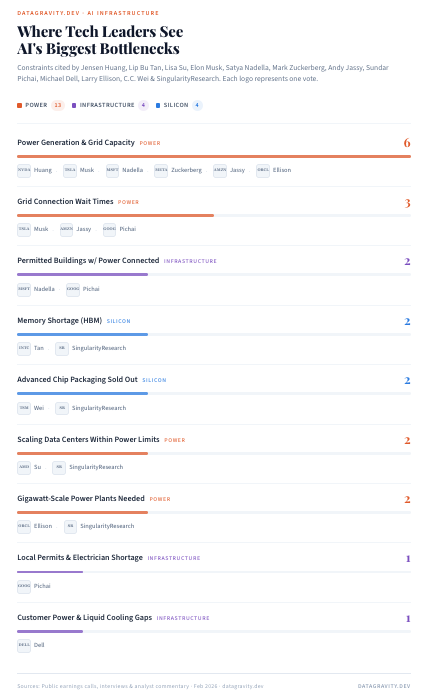

We analyzed public commentary from leading AI operators and hyperscalers and mapped the bottlenecks they consistently cite across the stack.

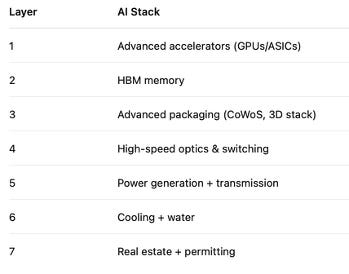

These constraints arise from a tightly coupled stack.

Each layer has independent lead times and independent bottlenecks. When constraints stack, supply elasticity collapses. Even if GPU output doubles, effective compute-hours can remain scarce.
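One way to see why doubling GPU output may not help is a simple min-of-layers model: deliverable compute is capped by whichever layer supports the least. The sketch below is illustrative only. The layer names and capacity figures are hypothetical, not taken from the operator commentary, and a real stack will not reduce to a single number per layer.

```python
def binding_constraint(capacity_by_layer):
    """Return (layer, capacity) for the layer that caps deliverable compute."""
    layer = min(capacity_by_layer, key=capacity_by_layer.get)
    return layer, capacity_by_layer[layer]


# Hypothetical capacities: each value is the amount of compute (in arbitrary
# "GPU-equivalent" units) that the layer could support on its own.
layers = {
    "gpus": 100.0,
    "data_center_shells": 90.0,
    "cooling": 80.0,
    "power": 60.0,
}

before = binding_constraint(layers)

# Double GPU output and leave every other layer unchanged.
doubled_gpus = {**layers, "gpus": layers["gpus"] * 2}
after = binding_constraint(doubled_gpus)

print(before)
print(after)
```

Under these assumed numbers, both lines print `('power', 60.0)`: the extra GPUs change nothing because power was already the binding layer.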

And that scarcity is not evenly distributed.

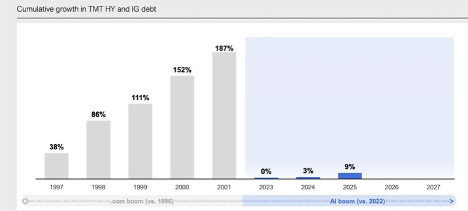

The Fiber Analogy Is Incomplete

There are clear parallels to the late-1990s fiber buildout:

~$500B in telecom infrastructure investment

39 million miles of fiber laid

<5% utilization at the 2001 peak

A decade to absorb excess capacity

But there are two critical differences:

Fiber could sit idle cheaply.

The AI buildout is funded by hyperscaler balance sheets, not junk debt.

Source: Coatue chart of the day

Fiber could sit idle at near-zero marginal cost.

AI infrastructure burns cash every day it sits unused.

Power contracts

Cooling costs

Staffing

Depreciating GPUs

Compute depreciates over ~5-8 years. Fiber depreciated over decades.
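To put rough numbers on that gap, here is a minimal straight-line depreciation sketch. The capex figure is hypothetical, "decades" is assumed to mean 25 years, and real schedules (salvage value, accelerated methods) will differ.

```python
def annual_depreciation(capex, useful_life_years):
    """Straight-line depreciation: equal write-down each year, no salvage value."""
    return capex / useful_life_years


CAPEX = 1_000.0  # hypothetical spend, arbitrary currency units

gpu_fast = annual_depreciation(CAPEX, 5)   # low end of the ~5-8 year range
gpu_slow = annual_depreciation(CAPEX, 8)   # high end of the range
fiber = annual_depreciation(CAPEX, 25)     # "decades" assumed to mean 25 years

print(gpu_fast, gpu_slow, fiber)
```

On these assumptions the same spend is written down at 125 to 200 per year as compute versus 40 per year as fiber, which is one reason idle GPUs are costlier to carry than idle fiber was.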

Which brings us to the most important constraint in the stack:

Power.

Power Is the Binding Constraint in AI

Unlike GPUs:

Power is location-bound.

Interconnection queues take years.

Transmission upgrades take longer.

Generation requires long-term offtake.

Permitting is political and regional.

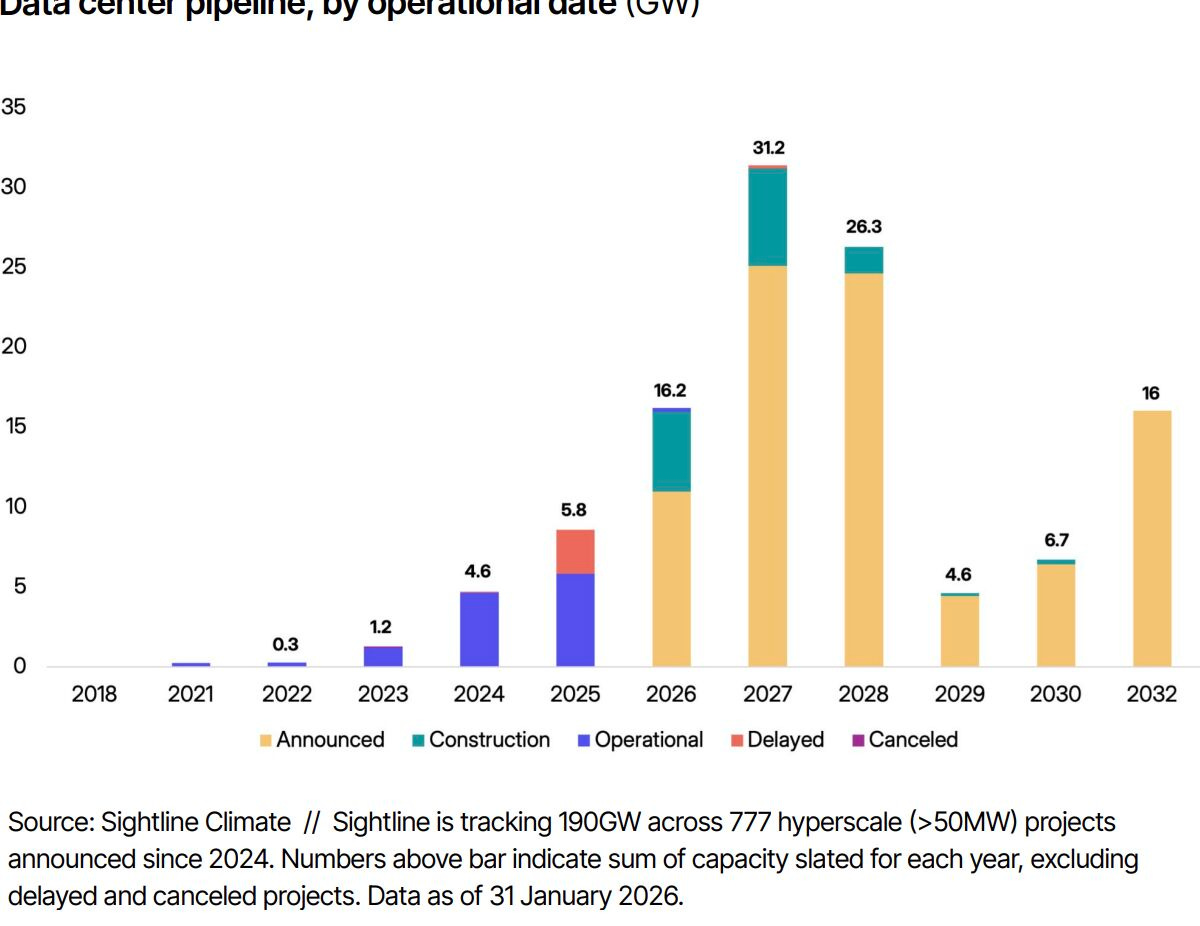

You cannot spin up gigawatts the way you spin up GPU clusters. A GPU order cycle is measured in quarters. A transmission upgrade is measured in years.

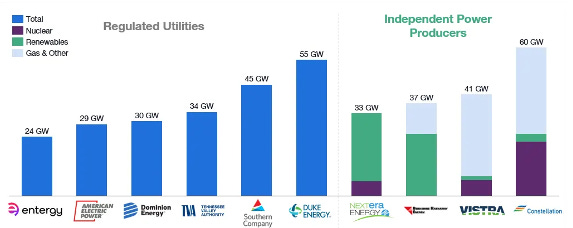

In many U.S. regions, power availability is already the binding constraint on new AI campuses. Each major data center operator is rushing to lock up as much cheap power as it can.

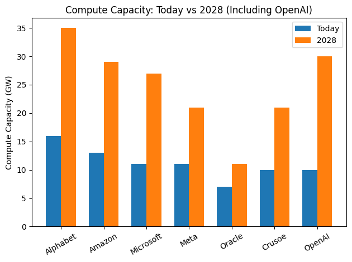

Source: Public estimates

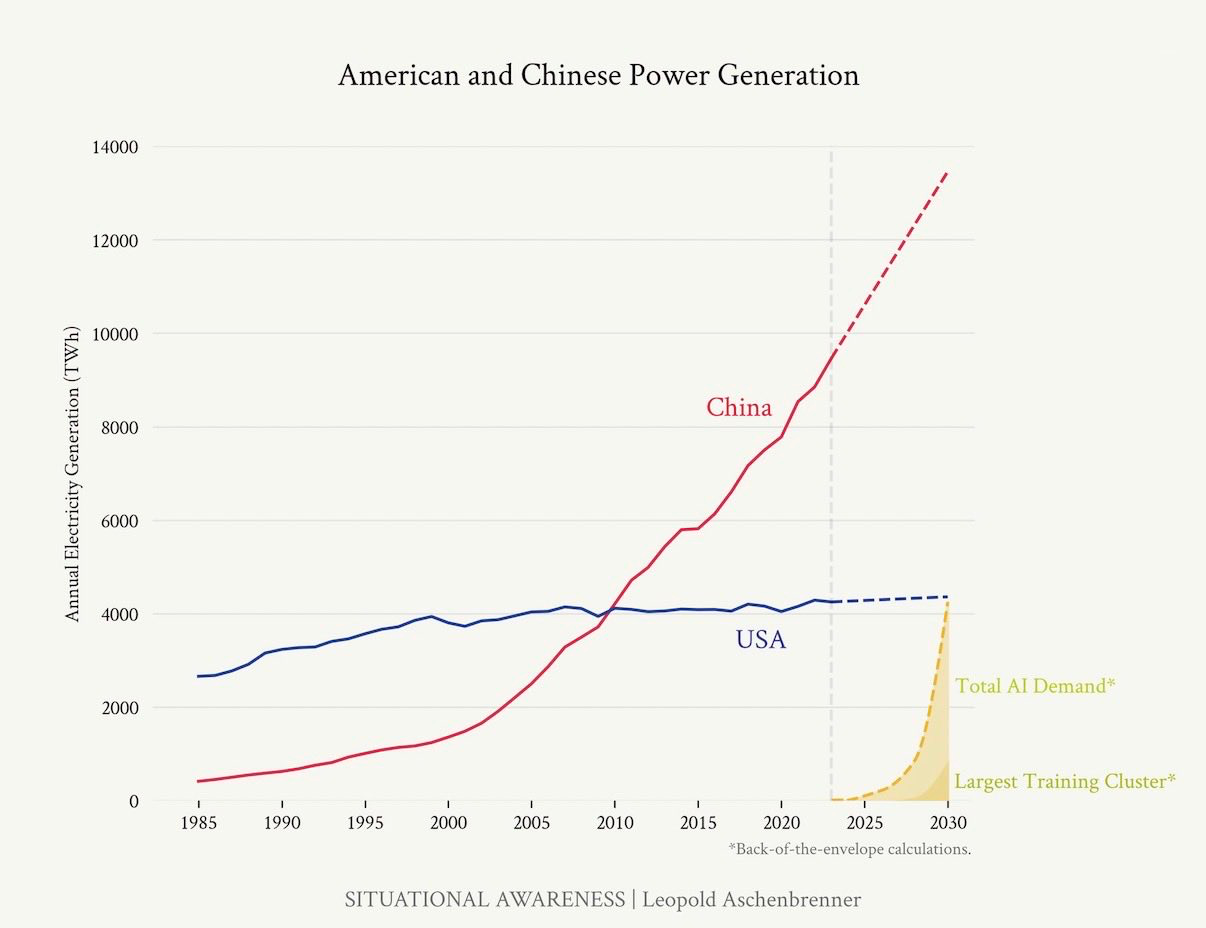

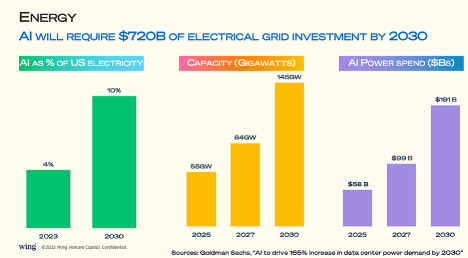

Last year we projected AI data centers would reach:

10% of U.S. grid demand

~145 GW by 2028

New bank estimates suggest we will hit those numbers before 2027.

That is not a cyclical constraint.

That is structural.

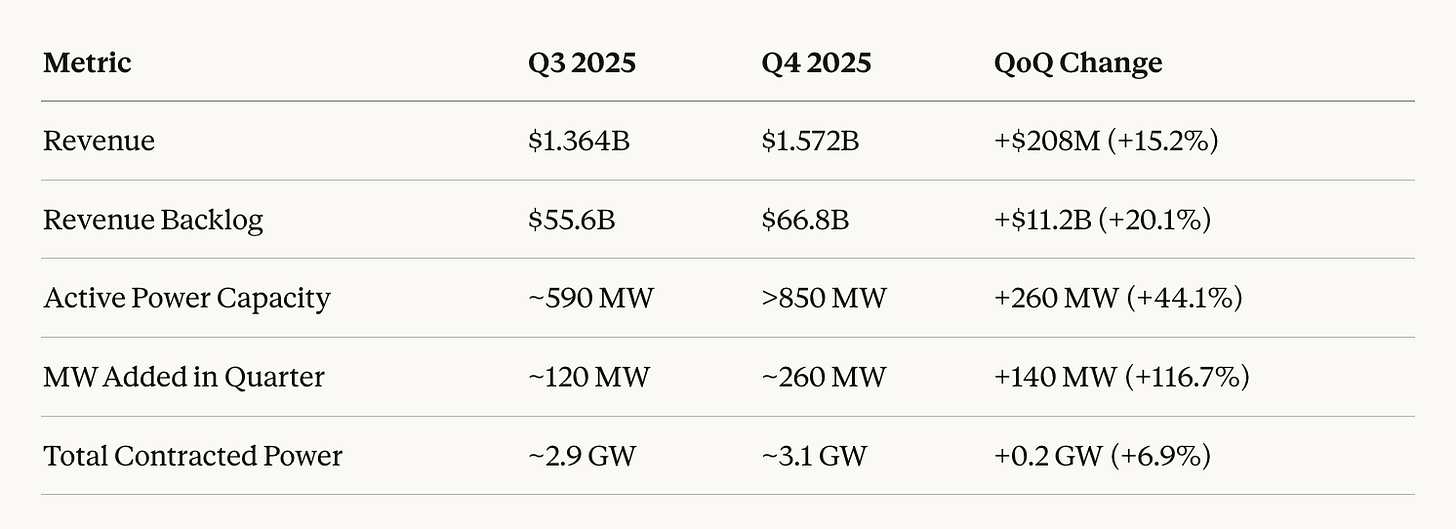

As an example, CoreWeave’s revenue backlog is scaling faster than its contracted power footprint. Commitments can scale in months. Power cannot.

Power Is Not a Commodity — It’s a Heterogeneous Stack

Deliverable megawatts — firm, high-density, grid-connected — are the scarce asset.

Electricity differs by:

Baseload vs peaking

Dispatchability

Intermittency

Transmission congestion

Regulatory structure

Geographic deliverability

A firm nuclear-backed megawatt co-located with transmission is not equivalent to intermittent solar behind congestion.

As AI campuses move toward multi-gigawatt scale, these differences become economically decisive.
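A rough way to compare two nameplate megawatts is to discount each by how often it actually produces and how much of that output gets through congestion. The sketch below does only that. The capacity factors and curtailment share are illustrative assumptions, not figures from this analysis, and average output understates the difference, since firmness (being available at a specific hour) is not captured by an average.

```python
def average_deliverable_mw(nameplate_mw, capacity_factor, curtailment_share):
    """Average MW reaching the load after intermittency and congestion losses."""
    return nameplate_mw * capacity_factor * (1.0 - curtailment_share)


# Illustrative assumptions: a firm source running ~90% of the time with no
# curtailment, versus solar at a ~25% capacity factor losing 10% to congestion.
firm_mw = average_deliverable_mw(100.0, 0.90, 0.0)
solar_mw = average_deliverable_mw(100.0, 0.25, 0.10)

print(f"firm: {firm_mw:.1f} MW, solar behind congestion: {solar_mw:.1f} MW")
```

Under these assumed inputs, 100 MW of nameplate capacity delivers on average about four times more when it is firm and uncongested.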

The U.S. grid is not one market. It is ~1,700 fragmented micro-markets with political overlays.

For hyperscalers, that means:

Tariff fragmentation

Interconnection queue risk

Congestion pricing exposure

Regional political constraints

Geography matters too. Texas has more new GW of data centers than all other states combined. Equity markets are re-rating toward regions with power headroom. Texas-heavy utilities (NRG Energy, Constellation Energy) and landholders (Texas Pacific Land) have become indirect AI beneficiaries. How much power capacity a player controls, where it sits, and what type of power it is are key determinants of which energy players will thrive.

Source: Coatue chart of the day

The Inference Shift Changes Everything

Training is episodic CAPEX. Inference is ongoing load.

Inference-heavy facilities are provisioned with headroom for:

Peak traffic events

Failover redundancy

Geographic routing

Grid instability

Future expansion

But utilization fluctuates with:

Model efficiency gains

Traffic routing shifts

Customer concentration

Product cycles

The result: unused firm capacity.

This is the power analog to idle reserved GPU instances.
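The gap between what a facility contracts and what it draws can be sketched in a few lines. The contracted block and hourly load profile below are hypothetical, and the function name is ours. This is a back-of-the-envelope view, not how any operator actually reports capacity.

```python
def headroom_report(contracted_mw, hourly_load_mw):
    """Compare a firm contracted block against an observed load profile."""
    peak = max(hourly_load_mw)
    average = sum(hourly_load_mw) / len(hourly_load_mw)
    return {
        "peak_headroom_mw": contracted_mw - peak,     # never used, even at peak
        "average_idle_mw": contracted_mw - average,   # idle in a typical hour
        "utilization": average / contracted_mw,
    }


# Hypothetical inference campus: 100 MW firm contract, six sample hours of load.
report = headroom_report(100.0, [40.0, 55.0, 70.0, 60.0, 45.0, 30.0])
print(report)
```

In this made-up profile, 30 MW is never touched even at peak and half the contract sits idle in an average hour. That is the capacity a secondary market would be pricing.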

We’ve already seen compute marketplaces emerge to manage overbuild risk.

Power is next.

Power Is Becoming Financialized

Long-duration power contracts are starting to resemble financial instruments.

We are beginning to see:

Subleasing of contracted power blocks

Transfer of interconnection rights

Monetization of demand flexibility

Bundled “power + compute” offerings

The next logical step is standardized contracts, clearinghouses, and secondary liquidity.

This is where structural opportunity emerges.

The Strategic Example: Vertical Integration

Alphabet recently paid ~$5B to acquire Intersect Power (founded 2016), a large-scale clean energy developer.

Intersect develops and operates:

Utility-scale solar

Battery storage

Hybrid generation

The acquisition vertically integrates generation with AI infrastructure — reducing basis risk, securing long-term supply, and internalizing power strategy.

This is not an energy investment. It is a compute hedge.

Power is no longer a utility expense. It is a competitive input.

The Venture Opportunity: The “Prime Broker” of Power

The market structure around AI power procurement is immature.

The bottleneck is measurable.

The durable venture layer is not steel and concrete. It is:

Transaction rails

Portfolio optimization software

Structured finance

Liquidity layers

What does not exist yet:

A robust secondary clearinghouse for:

Capacity blocks

Interconnection rights

Long-duration contracted megawatts

If inference overbuild materializes, secondary liquidity becomes mandatory.

Why Power Scarcity Persists Even in Overbuild Scenarios

Here’s the key insight:

Even if GPUs overshoot demand by 5–10 years…

Power does not.

Power is:

Regulated

Capital intensive

Politically constrained

Slow to expand

Memory is cyclical.

Compute pricing compresses.

Power availability remains sticky.

Scarcity shifts pricing power upstream — into the physical layer.

The Structural Feedback Loop

AI lowers the cost of producing software and services.

Lower costs → more adoption.

More adoption → more inference.

More inference → more infrastructure.

More infrastructure → more power demand.

And the more this loop accelerates, the more valuable deliverable megawatts become.

What Happens If We Overbuild?

The central risk is not that AI demand disappears.

It is that parts of the stack overshoot by years.

But here’s the asymmetry:

Idle GPUs can be redeployed.

Idle fiber sits cheaply.

Idle power commitments are expensive.

Compute overbuild compresses margins.

Power overbuild creates optionality.

Those are different regimes.

The Clean Thesis

Power is the lowest-variance, longest-duration constraint in the AI compute stack.

It is:

Slow moving

Structurally regulated

Fragmented

Financializing

Becoming programmable

And unlike models or agents, it does not get commoditized by algorithmic improvement.

For venture investors obsessed with model layers, the more durable wedge may sit beneath them:

The transaction, orchestration, and financial infrastructure of energy.

Because wherever portfolios form, software follows.

AI infrastructure is becoming a power portfolio management problem.

And wherever portfolios exist — financial infrastructure follows.